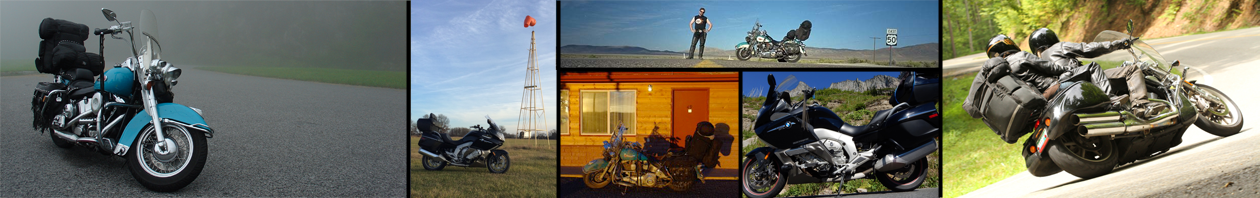

I was recently musing over how evolving technology has changed the way I publicize stories about my two-wheeled travels.

With this post, I get to channel my inner curmudgeon, the old man down the street that is forever telling the neighborhood kids to get off his damn lawn. So here goes…

When it comes to telling your friends about your latest motorcycle tour, you kids have it way too easy.

Back in the 1990s, we didn’t have a speedy wireless connection to the internet, available from a small device that fits in your pocket, armed with a bevy of convenient applications to capture and share every sight, sound, smell(?), and story that you experience, all within 30 seconds of you having the original experience.

We had to work to tell our stories. But first, a history lesson.

The Internet was complicated

In the early 1990s, the Internet was already in its twenties but it still acted like an unruly teenager. The most common uses were:

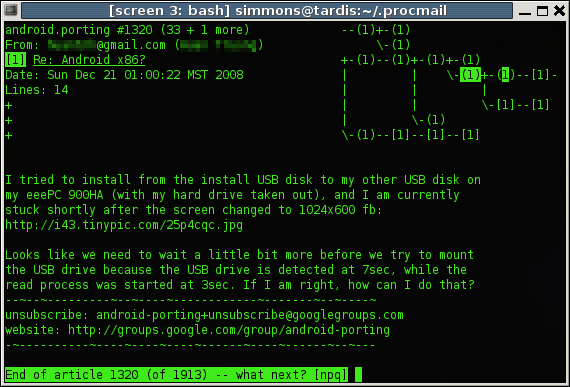

- Email (you know what that is)

- Usenet, which is a chat/discussion tool

- FTP, which stands for File Transfer Protocol

- World Wide Web (in its infancy)

Email – really simple and really nasty

Email was already very cool, but not many people had an actual internet-facing email account (more about that later). The problem was getting someone to give you an email account on their mail server. Most people that had an internet-facing email account had it through their company or university. The email software was often a command line interface running on a terminal window, though a few graphical interfaces for email were becoming available.

The messages themselves were text-only (no pretty formatting). Attaching an image to an email sent you spiraling down into a special brand of hell. Attachments needed to be encoded a special way (uuencode) so they could be injected into the text-only stream of an email message. The uuencode process, naturally, was another command-line tool that you had to run on your image before you tried to attach it to your email. Never mind that the size of each email message was severely limited, which often required you to uuencode the image and split it into bite-sized chunks that you could distribute as attachments across several email messages that you needed to number (1 of 4, 2 of 4, etc.). The email recipient had to save the multiple attachments, reassemble them (in the correct order) into a single uuencoded file, un-uuencode the file (yet another command line tool), and finally, they had an image file that they may be able to look at, unless the original image format was not common on their computer platform (Windows, Mac OS, Unix, etc.). For example, it was hard to look at a Windows .bmp file on a Mac.
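For the curious, here is roughly what that dance looked like, sketched in Python rather than the command-line tools of the day. Treat it as an illustration under assumptions, not the actual software: the function names, the message dictionaries, the subject line, and the lines-per-message limit are all made up for the example. The only real pieces are `binascii.b2a_uu` and `binascii.a2b_uu`, the standard library's uuencode helpers.

```python
import binascii


def uuencode_lines(data: bytes, name: str) -> list[str]:
    """Encode bytes as uuencoded text lines (45 input bytes per line)."""
    lines = [f"begin 644 {name}"]
    for i in range(0, len(data), 45):
        encoded = binascii.b2a_uu(data[i:i + 45])
        lines.append(encoded.decode("ascii").rstrip("\n"))
    lines += ["`", "end"]  # zero-length line, then the end marker
    return lines


def split_into_messages(lines: list[str], lines_per_message: int) -> list[dict]:
    """Chop the encoded text into numbered, message-sized chunks."""
    chunks = [lines[i:i + lines_per_message]
              for i in range(0, len(lines), lines_per_message)]
    total = len(chunks)
    return [{"subject": f"new bike photo ({n} of {total})",
             "part": n, "total": total, "body": chunk}
            for n, chunk in enumerate(chunks, start=1)]


def reassemble(messages: list[dict]) -> bytes:
    """Put the parts back in order and decode them to the original bytes."""
    if not messages or len(messages) != messages[0]["total"]:
        raise ValueError("some parts never arrived")
    ordered = sorted(messages, key=lambda m: m["part"])
    lines = [line for m in ordered for line in m["body"]]
    payload = lines[1:-1]  # drop the "begin ..." and "end" lines
    return b"".join(binascii.a2b_uu(line) for line in payload)


if __name__ == "__main__":
    photo = bytes(range(256)) * 4            # stand-in for a small image file
    parts = split_into_messages(uuencode_lines(photo, "bike.gif"), 8)
    print([p["subject"] for p in parts])
    print(reassemble(list(reversed(parts))) == photo)
```

The recipient's job is the `reassemble` step: collect every part, sort them, strip the wrapper lines, and decode. Lose one message along the way and there is no picture.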

Usenet – groups before Google or Yahoo

Email was nice but, for some people, Usenet was the killer application of the adolescent Internet. If you use Google Groups or Yahoo Groups now, you have a basic idea of the need that Usenet filled. It was a seemingly infinite list of discussion forums, each with its own never-ending stream of topic threads. The name of each forum was a period-delimited string of subdivisions getting more specific with each additional term. For the motorcycle crowd, rec.motorcycles was the place to be.

The story of how rec.motorcycles.harley finally became available within rec.motorcycles is a lengthy one and worthy of several separate blog posts. Alas, I was fashionably late to that party so it is not appropriate for me to tell that tale.

Like email, each message in a given discussion thread was text-only. Also like email, attaching files (e.g., images) was just as complicated as it was for email, with the added joy of tracking down all of the “parts” of a given image. The way Usenet works is that each company or university that wanted to use Usenet hosted a Usenet server somewhere in their IT domain. Usenet users had accounts for a specific Usenet server, not for the service as a whole. When you post a message, it immediately becomes available on your “local” Usenet server. Your message is also added to a queue to be relayed to all the other Usenet servers around the world. Needless to say, some servers are better than others and not all messages get relayed everywhere. Consequently, it was not uncommon for you to try and view a friend’s photo of their new ride only to find that your Usenet server only had three of the required four messages to reassemble that image.

FTP – a protocol without a host

FTP (File Transfer Protocol) was, and continues to be, a wonderfully useful tool for moving discrete files from one location to another. In the mid-1990s, it was almost the only file-transfer game in town.

The problem with FTP was that, like any other internet-facing service, you needed an account to use it. In short, you needed a company or university to give you an FTP account, which would give you the ability to post files to a given location, perhaps your own area, or perhaps an area shared with a bunch of other users. Once you posted a file to a given location, you could email your friends to go get it but only if they also had an FTP account on the same server.

Given these limitations, FTP was useful for transferring files between individuals but not to groups. Consequently, most images shared with multiple friends were uuencoded, broken into pieces, and distributed as a series of Usenet messages to one of the alt.binaries.* newsgroups.

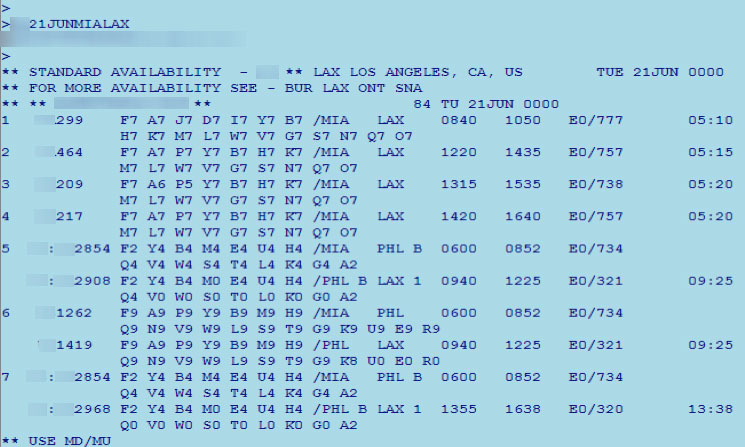

World Wide Web – a child with potential

In the early days of HTTP and the world wide web, the vast majority of active sites hosted reference content. Libraries of information were easy to publish to HTML and host. Sites that actually did something were primarily limited to search engines, MapQuest, and a few sites that let you interface with the Sabre flight reservation system which, at the time, was the pinnacle of cool. Screw the travel agent, I can search for my own damn flights!

Hosting your own content on the web was too complicated for most users. In a nutshell, you needed the following:

- You’d have to con your company or university into opening up the pub folder in your home directory to be publicly visible via HTTP. Unless you were an IT professional for a company and did this for yourself surreptitiously, most companies didn’t want personal content being hosted from a company domain.

- You’d need an FTP account to access the hosted directory and populate it with content.

- HTML editors didn’t exist yet, so you needed to know enough about HTML that you were comfortable editing the tagging by hand using a text editor.

- You needed to email your new web address to all your friends.

- You could send your web address to the search engine companies and see if they wanted to add you to their browsing hierarchy.

There was a time when the Ghost Cruises site was on the navigable hierarchy of links at Yahoo.com. It was under Leisure > Motorcycling > Travelogues.

Online Services – Internet for non-believers

In spite of the fact that the Internet of the early 1990s was not nearly ready for use by non-technical users like your Aunt Bessie, there were thousands of people communicating online through online services. The most popular online services were CompuServe, America Online (AOL) and Prodigy. For all practical purposes, online services were internet service providers before the internet became something compelling that everybody wanted to use.

Each online service provided you with an email address and gave you access to gobs of information, such as:

- Weather forecasts for anywhere in the country

- Worldwide news coverage

- Latest sports scores

- Dedicated groups for virtually any interest (a kinder, gentler, Usenet)

Each online service also provided a list of phone numbers that you could call with your modem to connect to the service, read your email, and consume their content. The challenge was finding a number in your local area so the call wasn’t a long distance call (remember long distance calls?). In a pinch, you could use a toll-free number but this often was time-limited or involved an extra charge above your normal monthly fee.

The way these online services competed was to expand their network of local access numbers (sounds a lot like today’s wireless phone providers expanding their network) and to add more content and offer it with more glitzy graphics. The challenge was to provide that content and glitz in a way that it was still consumable with a 19.2kbaud modem connection.

Most people access the internet today at download speeds of 3-12Mbps (megabits/second). Back in the mid-1990s, a fast connection was anything above 19.2kbps (kilobits/second). Do the math. Can you say “slow”?
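If you would rather not do the math yourself, here is a quick back-of-the-envelope sketch. The 1,000,000-byte photo is an assumed size picked purely for illustration, and the calculation ignores protocol overhead and modem compression, so read the results as ballpark figures only.

```python
def transfer_seconds(size_bytes: int, bits_per_second: float) -> float:
    """Idealized transfer time: total bits divided by line speed."""
    return size_bytes * 8 / bits_per_second


PHOTO_BYTES = 1_000_000  # assumed size of one photo, for illustration only

SPEEDS = [
    ("19.2 kbps modem", 19_200),
    ("3 Mbps broadband", 3_000_000),
    ("12 Mbps broadband", 12_000_000),
]

for label, bps in SPEEDS:
    seconds = transfer_seconds(PHOTO_BYTES, bps)
    print(f"{label:>18}: {seconds:8.1f} s")

# How many times faster is the slow end of "today" than the fast modem?
print(3_000_000 / 19_200)
```

Under those assumptions, the photo that takes a few seconds today would have tied up the modem for roughly seven minutes.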

Today, the smartphone is the epitome of a “gotta-have-the-latest” product. We line up down the street to buy the latest iPhone or Samsung Galaxy. Back in the early 1990s, the “gotta-have-the-latest” product was the modem. The lines weren’t as long, but I guarantee you that each time the speed or compression improved for a given model, geeks flocked to the local big-box store and laid down their cash so they could upgrade from 19.2kbaud to 28.8kbaud or from V.32 to V.42bis.

To this day, I can still tell the difference between a 14.4kbaud connection and a 28.8kbaud connection just by hearing the modem tones at connection time. My name is Ghost and I’m a bloody geek.

Initially, each online service was its own little world. You could only interact with the content offered by your chosen service and you could only email other members of the same service. At the height of my own pre-real-internet use, I had accounts with both CompuServe (who had a better Sabre interface and a better model railroad forum) and America Online (who had a more glitzy interface, especially on the Mac OS, and had faster local access numbers).

As the unmoderated wild-west landscape of the internet became more useful, the online services were forced to break down some of their walls and give their users some access to the outside world. I distinctly remember the glee of discovering the undocumented feature that allowed me to send email from my America Online account to an external internet account, or even a CompuServe user. Soon thereafter, AOL debuted a special browser window that had a web address field that you could set to anywhere! Oh, the freedom!

By the mid 1990s, the quality and breadth of available content on the internet had exploded. CNN, The Weather Channel, ESPN, IMDB, and Amazon constituted the tip of the iceberg of quality content and services. The parallel content hosted on the online services simply couldn’t compete. Eventually, much of the content that was originally presented in the online service’s client application became links to external web sites.

About this time, a new kind of company started appearing in the yellow pages: Internet Service Provider (or ISP). For a monthly fee similar to your favorite online service, they would provide you with an email account, access to an online directory to host your own web site, and a series of local access numbers for dialing into their service. The emergence of ISPs sounded the death knell for the old online services. Prodigy was shut down on October 1, 1999 though there is a small effort to resurrect its stored data as a reference archive. CompuServe was purchased by the owner of America Online in 1999. The original CompuServe Information Service, later rebranded as CompuServe Classic, was finally shut down on July 1, 2009. The newer version of the service, CompuServe 2000, continues to operate as an internet portal. America Online is, technically, still around but they are a mere shadow of their early 1990s grandeur.

Trip Reporting Before the Wireless Age

The first time I sent out daily trip reports during my motorcycle tours, the only people who cared about my scribblings were family members that had accounts with America Online (AOL). Consequently, all I had to do was connect to AOL, compose an email and send it. As simple as this sounds, it was not without its share of challenges. Later, as my prospective audience grew, it became more complicated to post, and later host, trip reports.

Whose computer do you use?

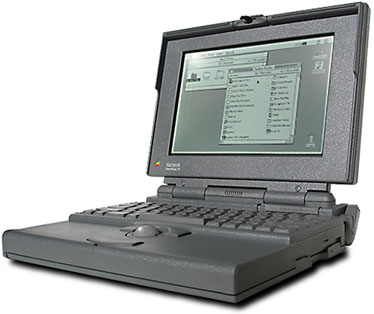

Back in the 1990s, we didn’t have MacBook Airs or sexy-slim tablets. The term laptop was practically brand new and most portable computers were considered merely lug-able.

My own beloved Powerbook 170 wasn’t horribly large but it still took up a lot of room in my scant motorcycle luggage. I also wasn’t overly confident that the hard drive and floppy drive mechanisms would react kindly to three weeks of bouncing around on the back of a motorcycle. In spite of these concerns and space compromises, a computer was an unavoidable evil because you had to run the online service’s client application on something if you hoped to get online and send/receive messages or check on weather.

For my first few motorcycle tours, I had yet to cultivate my nationwide network of friends who rode motorcycles and frequented the rec.motorcycles.harley usenet newsgroup. In addition, hotel chains had yet to realize that an available computer for customers was a marketable guest perk and WiFi was still several years off. These conditions severely limited my ability to find someone willing to let me use their computer. Consider the following scenario: a complete stranger dressed head-to-foot in black leather walks up and asks to use your home computer. Is your response:

- A – “No problem! Can you stay for dinner and meet my teenage daughters?”

- B – “Get lost or I’m calling the cops.”

My quick-and-dirty survey found that 99.6% of responders opted for response B. The 0.4% that chose response A admitted that they just wanted to lure the stranger into their home and feed him to their pit bull.

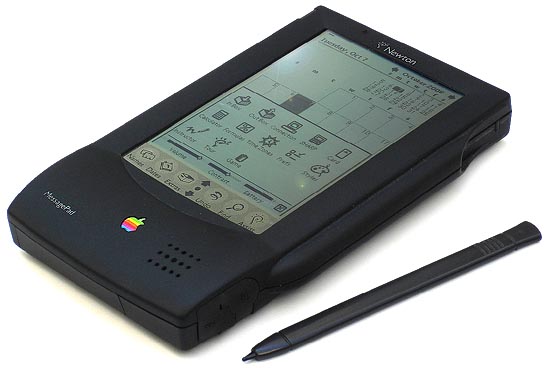

Unable to rely on the kindness of strangers, I resorted to my secret weapon: the Newton. From 1993 to 1998, Apple dabbled in the notion that you could do real work with a sub-laptop type of computer that looked like a small, thick, tablet. I owned three of them over the years and found them very well suited to my motorcycle tour computing needs.

My Newton(s) had:

- Data entry in the form of handwriting recognition or a small wired keyboard.

- An external 2400 baud modem that connected to the serial port. (Later models had an internal PCMCIA (PC card) slot for the modem, and those modems had up to 28.8kbaud speed.)

- A third-party America Online client application (Aloha) with access to AOL email and other basic services.

- A vehicle mileage/service database application called MPG, which worked beautifully for logging gas stops, service intervals, and trip distances.

- Word processing application for writing trip reports.

Armed with this device, I was fully equipped to write up the day’s events and send them to any email recipient that was interested. All I needed was a connection…

Getting online in the boondocks

All of the old online services, and subsequent early internet service providers, required a phone line to establish a connection between your computer’s modem and their network. As mentioned earlier, each online service had an extensive list of local phone numbers that users could call to get connected. I had saved the list for America Online to my Newton so I could refer to it to find access numbers wherever I went. The inconvenient truth was that most rural locales do not have a designated access number, leaving many of my favorite western destinations well off the beaten path of online connectivity. Online services also had toll-free numbers that you could call from anywhere in the country, but they often required a surcharge on your account or were limited to a few minutes per connection.

In the late 1990s, the newly appearing local ISPs tended to have good coverage for their local area (usually a handful of counties in a given state) and nothing beyond that. Like the online services, they often had a toll-free number but it came at a premium.

Ultimately, I found that the most reasonable strategy was to buy a long distance phone card and, if there was no local number available, program the modem connection software to dial the card number, provide my access code and PIN, and then dial the local access number that I usually used from home. To reduce the cost of getting online, the typical communication session went something like the following:

- Use my word processing application to write the trip report(s) or message(s) that I want to send.

- Establish a connection, send the prepared trip report(s) and/or message(s) to their recipients, receive any new email, and disconnect.

- Read the new email messages and, if necessary, compose replies.

- Establish a second connection, send the necessary replies.

- Access a web site for the weather forecast.

- If necessary, access MapQuest for any route planning for the next day.

- Disconnect.

The minutes on the phone card usually cost about 5-10 cents per minute, which meant a full communication session to send/receive email and check tomorrow’s weather and route usually cost less than a dollar. But this entire process depended on my ability to use someone’s phone line.

Whose phone line can you use?

When traveling the rural expanse of the US and Canada, getting a total stranger to let you use their phone is a challenge. They know you’re not a local and, therefore, are very likely dialing a long distance number. Even if you promise that the call is toll-free, they still treat you with a wariness (or open contempt) usually reserved for door-to-door salesmen.

To make matters worse, you spice up the scenario because you’re not actually interested in the phone itself. Instead, you want to hook up some newfangled device to the phone port, which could be doing god-knows-what over their phone line. To the computer illiterate, you might very well be wiring drug money to Colombia, disconnecting them from the power company, or installing a phone tap. For all of these reasons, I found that stopping at a nice farm house to get online had about the same likelihood of success as winning the lotto.

After some trial and error, I found that my best chance of getting a connection was at a city/town hall, a police station, or a place of business. The added challenge to using these candidates was that they tended to have proprietary phone systems where each available phone port used a special protocol (for example, PBX) to communicate with the phone system, which then connected that call with the outside world. In short, these systems wouldn’t understand the tones generated by my trusty modem. I needed a direct connection to a traditional phone line.

Luckily, there were two common office devices that needed a dedicated direct connection to an outside phone line: fax machines and credit card readers. While fax machines aren’t as popular now, nearly every government building or place of business in the 1990s had one. My strategy was to walk into the establishment, ask if they had a fax machine that I could use, convince them that it wouldn’t be a toll call, and proceed to disconnect the line from the fax machine and connect my Newton instead. Over the years, I successfully used this strategy at government buildings, auto parts stores, department stores, campground offices, gas stations, and one hospital.

If the only thing you could find was a PBX phone line, you weren’t completely out of luck. If you could disassemble the handset and get access to the mouthpiece, you could use a set of alligator clips to connect your modem to the microphone connectors on the handset. For this explicit purpose, I carried one of the following gizmos in my Newton case.

The closet hacker in me is proud to say that I successfully used this connection method so often that I wore out two of these devices before I retired my Newton.

Distribution challenges

As mentioned earlier, communicating my progress during my first few motorcycle trips merely required access to America Online email. However, as I became more involved in the rec.motorcycles.harley usenet group, it became necessary to post these reports as both email and usenet messages.

Since I didn’t have a usenet reader application for my Newton, I had to rely on the kindness of my online compatriots to fill the gap in my mobile computing solution. I would email daily trip reports to my designated poster and they would subsequently post a message to the usenet group with my content. This solution was far from perfect, often falling prey to problems with message encoding or line ending handling. In spite of these limitations, I used these “human proxies” for several years.

Putting it all together

From 1994-2000, I regularly hit the open road armed with the following mobile computing toolkit:

- Apple Newton, with the following software:

- A word processing application

- An application for connecting to America Online and through to the real internet

- A terminal program for running a UNIX shell on the servers at my place of work

- Various phone cables and connectors

- My microphone tap with alligator clips

Along with this toolkit, I had my strategy for borrowing phone lines to get a connection and my designated human proxy to repost my emails as usenet messages. With this (admittedly horribly complicated) process, I was able to do a fair amount of real “mobile” computing before cell phone networks were truly ubiquitous.

Discovering an audience

My favorite highlight from this era was posting trip reports as I headed out west for the first Run-to-the-Sun trip (1997). I was taking a lazy back-road route and sent emails containing trip reports for Day One and Day Two. Day three of the trip I spent visiting my old haunts from my college days on the Keweenaw Peninsula. Since I hadn’t moved my camp and the details of the day were more personal, I wrote up a summary of the day but didn’t send it to anybody. The following day, I resumed my normal posting and sent out a report for Day Four. The morning after day five, I was using a fax line at a Jamestown, ND pharmacy to post the Day Five report when I was inundated with 14 separate emails asking what happened to the Day Three report.

What a pleasant surprise it is for a writer to find that they do actually have an audience.

Only nerds will think this is cool

My most complex use of this early mobile computing platform came during a ride to visit friends at the 1998 Meeting of the Marked convention in Pittsburgh, PA. My schedule didn’t look like it was going to allow for me to attend but a last-minute favor from a dog sitter opened the door of opportunity and I packed up the Bike and left, excited that I would surprise my friends with an impromptu appearance. Unfortunately, my address for my friend in the area was out of date and I suddenly had nobody to contact and ride with to the hotel hosting the event. Which hotel? I had no idea.

Arrgh!

As it was evening, most of the places where I could borrow a phone line were closed, except for a Blockbuster Video store (remember when we would rent video tapes to watch them?). Using the phone line connected to their credit card machine, I was able to get online. I proceeded to:

- Use an early white pages website to perform reverse look-up using my friend’s phone number to obtain her current address.

- Use MapQuest to figure out where that address was located.

- Use my terminal application to log into my UNIX account at work. I then used the grep command line tool to search the file for my deleted emails to find the name of the hotel hosting the convention.

My friend never did show up at home that night (she was spending the night at the hotel, who knew?) and the hotel was booked for the evening, so I ended up at another hotel for the night. I met up with everybody for breakfast the next day.

Hosting instead of posting

In the spring of 1999 I decided that it was better to host my trip reports in HTML than to post them as text-only usenet messages. This opened up the possibility of adding the text of the trip reports while on the road and then adding supporting photos when I got back home. (I was not using a digital camera yet, so uploading photos on the road was not yet feasible.)

For home maintenance of the HTML for the original GhostCruises site, I used a commercial tool called Adobe PageMill. Since there was no HTML editor or site maintenance tools for the Apple Newton, which I continued to use until 2003, my only recourse for on-the-road trip reporting was to use the following strategy:

- Create placeholder HTML files and supporting navigation links for the daily trip reports for an entire trip before leaving home.

- Use the FTP client and word processing applications on the Newton to add content to the placeholder HTML files and post them back to the live web site.

- With each trip report update, send a link to the new page to the rec.motorcycles.harley usenet group and the Harley Email Digest email list.

- Add photos and refine the new content when I return home.

The complications of hosting photos

This strategy was effective but had the disadvantage that most readers would read the trip reports before any supporting images were added. This limitation seems nonsensical today but back in the mid-1990s digital cameras were bulky, went through batteries faster than a radio-controlled dune buggy, and had limited resolution. I found that the best image quality was obtained by taking photos on 35mm film with a small non-SLR camera and having them digitized when I processed the film. My favorite process was the Kodak PhotoCD process, which scanned the developed negatives and yielded sharp images at 3072 x 2048 pixels of resolution.

Aside: The PhotoCD resolution was about the equivalent of an image from a 6 megapixel digital camera. In 2003 I finally migrated to a 5 megapixel camera, the Sony DSC-V1, which captured images at a maximum resolution of 2592 x 1944 pixels–that was close enough to the PhotoCD resolution to tip the scales for me.
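The megapixel comparison is simple multiplication, sketched here for anyone who wants to check it. The `megapixels` helper is just an illustrative name; the pixel dimensions are the ones quoted above.

```python
def megapixels(width: int, height: int) -> float:
    """Total pixel count expressed in millions of pixels."""
    return width * height / 1_000_000


photocd = megapixels(3072, 2048)   # Kodak PhotoCD scan
dsc_v1 = megapixels(2592, 1944)    # Sony DSC-V1 at maximum resolution

print(f"PhotoCD: {photocd:.1f} MP")
print(f"DSC-V1:  {dsc_v1:.1f} MP")
print(f"The camera captures {dsc_v1 / photocd:.0%} of the PhotoCD pixel count")
```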

When I picked up my developed photos, I’d also receive a PhotoCD containing the digitized photos that I could incorporate into a nice gallery on my web site. While a cohesive gallery for a trip was nice, I also liked to incorporate some of the photos into the trip report text. Readers that followed along with the trip would consume the text while I was on the road and then decide whether they wanted to look through the same content again once I added the images. This was certainly better than having the trip reports live on as text-only usenet messages but it wasn’t the most streamlined experience for the reader.

Enter the smartphone

In 2002 I migrated from the Newton platform (killed by Apple in 1998) to a Palm-based personal digital assistant (PDA): the Handspring Visor Deluxe. By itself, the Visor Deluxe had no wireless networking capabilities (other than an IR port for short-distance “beaming” of small pieces of information). The Visor Deluxe did, however, have a Springboard slot for adding accessories like additional memory, a GPS receiver, or (drumroll please) a VisorPhone.

The VisorPhone module was a GSM radio, a speaker, an extra battery, and an antenna. When you slid the VisorPhone into the Visor Deluxe’s Springboard slot, it integrated with the contact list and internet tools (for example, email, FTP, web browsing, etc.) to give you the very first convergence of a PDA and a cell phone. This was the debut of the smartphone and it was a good thing.

While the cell phone support greatly simplified the process of getting online, the data connection was slow and the network (I used T-Mobile at the time) didn’t cover some of the more remote areas of the western US. (Keep in mind that these early smartphones couldn’t access Wi-Fi networks, which were not yet common.)

Even with the addition of an HTML editor on the PalmOS, there still wasn’t a camera on the device and I still had to edit placeholder HTML files that I created before I left on a trip.

Smartphone evolution improves photo handling

I used the VisorPhone for two riding seasons (2002-2003). In late 2003 Handspring debuted the Treo 600 and I immediately upgraded.

The Treo 600 added two important features that enhanced my trip reporting capabilities:

- An on-board camera.

- An SD card slot.

While the on-board camera took fairly dreadful photos, it did mark the first time that I could take a photo and immediately send it in an email or upload it to my website. Since the SD card slot happened to match the storage card in my digital camera, I now had a new workflow available for handling photos in trip reports:

- Take a photo with my digital camera.

- Remove the SD storage card from the camera and insert it into the Treo.

- Use my FTP application to upload the photo to my website.

- Use my HTML editor to include the new photo in a trip report.

This was a bit cumbersome but it marked the first time that I could publish a trip report with photos included immediately. My old process for adding them later was happily retired.

Geotagging

Geotagging is the process of adding location information to the metadata saved with a given digital image. Any given photo saved with a digital camera knows when it was taken; add geotagging and now the photo also knows where it was taken. This opens up the possibility of placing photo tic-marks on a map and clicking them to display the corresponding photos in that location. In 2009, the photo management tool that I used, iPhoto, added a Places feature that gave you a world map with your photos on it. It was so cool that I vowed to geotag all of the photos (1000s of them) in my photo library.

Since retroactively geotagging your photos after you take them is a bit tedious (I learned this quickly), capturing location information while you take the photos is very important. Suddenly I needed a GPS unit.

Today, most smartphones have an on-board GPS and geotag the photos you take with the on-board camera. If you have photographic requirements that exceed the capability of your smartphone, many digital cameras also have an on-board GPS and can geotag images as you take them. Unfortunately, back in 2009, I didn’t have a smartphone with a GPS and digital cameras with a GPS were all top-of-the-line units that I didn’t need and couldn’t afford.

Enter the GPS data logger.

A GPS data logger is, simply stated, a traditional GPS unit without a display. All it does is save your position or a track of your position over time. When riding across the country, the data logger provides an accurate track of exactly where you went, including embarrassing details like where you had to backtrack after a wrong turn. I used the track files from the data logger for the following purposes:

- Plot my track on a map for inclusion on my trip reports.

- Geotag my photos.

“But wait”, you say to yourself, “your data logger and digital camera are two different devices. How do they integrate with each other?” The quick answer is: “They don’t.” But like anything, there is a process you can follow at the end of your trip to make it happen.

- Import your photos from your digital camera to your computer.

- Import the track file from your GPS data logger to your computer.

- Use a tool like GPSBabel to convert your track file(s) to a compatible format, filtering the data (if necessary) and combining individual tracks into a single track for the entire trip.

- Use a tool like PhotoLinker to match the date/time of your photos with the corresponding date/time on your GPS track and add that location to the metadata on the photos.
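The matching in that last step boils down to looking up each photo's timestamp on the track and interpolating between the two nearest track points. The sketch below shows the general idea only; it is not how PhotoLinker itself works. The `locate` function, the `camera_offset` parameter, and the sample coordinates are all hypothetical, and it assumes the track is sorted by time and that both clocks use the same time zone once the offset is applied.

```python
from bisect import bisect_left
from datetime import datetime, timedelta

# A track point is (timestamp, latitude, longitude).
TrackPoint = tuple[datetime, float, float]


def locate(track: list[TrackPoint], photo_time: datetime,
           camera_offset: timedelta = timedelta(0)) -> tuple[float, float]:
    """Estimate where a photo was taken from a time-sorted GPS track.

    camera_offset corrects a camera clock that runs ahead of or behind
    the GPS clock (GPS time = camera time + camera_offset).
    """
    when = photo_time + camera_offset
    times = [point[0] for point in track]
    i = bisect_left(times, when)
    if i == 0:                      # photo taken before the track starts
        return track[0][1], track[0][2]
    if i == len(track):             # photo taken after the track ends
        return track[-1][1], track[-1][2]
    (t0, lat0, lon0), (t1, lat1, lon1) = track[i - 1], track[i]
    fraction = (when - t0) / (t1 - t0)   # 0.0 at t0, 1.0 at t1
    return (lat0 + fraction * (lat1 - lat0),
            lon0 + fraction * (lon1 - lon0))


if __name__ == "__main__":
    start = datetime(2009, 7, 4, 10, 0, 0)
    track = [  # made-up sample points, one minute apart
        (start, 47.000, -88.000),
        (start + timedelta(minutes=1), 47.002, -88.004),
        (start + timedelta(minutes=2), 47.004, -88.008),
    ]
    print(locate(track, start + timedelta(seconds=90)))
```

Getting the camera clock right matters more than the interpolation: a camera that is an hour off will happily place every photo an hour down the road.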

iPhone sends datalogger to retirement

When I finally purchased an iPhone (iPhone 4 to be exact) in 2010, it came with an on-board GPS. I use the iPhone app Trails to capture track files and have retired the separate GPS datalogger.

GPS-enabled digital camera

During this riding season (2015) I somehow lost my beloved Canon SX100IS and was forced to replace it. I used this opportunity(?) to purchase a camera (Nikon Coolpix S9900) that had a built-in GPS so I wouldn’t have to run through the post-trip geotagging process. I still use the Trails app on the iPhone to capture track information, but I only use that information for mapping now.

Blogging and Facebook — it’s all about now

In its infancy as a publicly consumed resource, the internet was all about reference material–libraries of static data. As it evolved, the internet became more and more about currency–what’s happening right now. Two of the technologies that strongly influenced that trend are blogging and social networks.

Migrating Ghost Cruises to a blog

In terms of hosting your own content on the internet, there are very few of us (other than major corporations) who “roll our own” websites anymore. In spite of the growth in internet use, including everybody from small children to grandparents, most internet users don’t know the first thing about how any of the services/sites that they use actually work. They lack the knowledge to craft their own website. Those of us who have the knowledge often don’t have the time to spend hours writing HTML code and negotiating with tools like Dreamweaver to get exactly what we want. Weblog services (blogs for short) changed all that.

Most early blogs were service-oriented. They provided a person with a way to publish content without having to manage a website. Instead of buying a web space, authoring a bunch of HTML, and updating it periodically, you simply got an account from the blog service, which hosted whatever you posted. Where traditional web developers used HTML editors, the blog services provided an online content editor that acted like a word processor, and your published content was live immediately without the need for FTP transfers. Readers who liked what you were posting could subscribe to your blog and receive a notification (usually via email) whenever you posted something new.

While there are several blogging services available, the one that caught my eye was WordPress (it’s also the most popular). It is available as a service, but it is also available as an application you install on your own web space, as long as that web space has access to PHP and an SQL database (most ISPs provide these resources). Since the blog application and all of its content are hosted online and edited/managed via the online application, it’s available from everywhere. This is very convenient when posting trip reports from any computer at a friend’s house or a hotel lobby. While you can browse to the blog site on a smartphone, the WordPress team also created an iOS app that provides handy phone/tablet access to your blog.

Given the kind of reference (packing lists), episodic (trip reports) and anecdotal (philosophical ramblings) content that I tend to publish, a blog made perfect sense for me. I started migrating my old Dreamweaver site to WordPress in 2011 (and it’s still an ongoing process).

Getting sucked into Facebook

It seems strange to write a paragraph or two describing what a social network is. Everybody already knows this, right? The concept is simple. Take the publishing concepts behind a blog and simplify them–keep the user’s posts short and sweet but retain the ability to add links and photos. Then let all the users choose who they want to associate with, creating a network of friends that automatically get to see the stuff they post.

On the surface, a social network is a rather handy resource. Something significant happens in your life (a new job, a new bike, a breakup with a significant other, or a simple visit to the local ice cream joint) and you can post it and immediately notify your network of friends without having to send out a few dozen emails or make a bunch of phone calls. Conversely, the network keeps you informed of what the friends in your network are posting so you can keep up with what’s happening in their lives as well.

However, much like the Wizard of Oz, the real story (in this case, the business model) for the social network is behind the curtains. The interconnections between friends and the things they’re posting and linking to constitute a very marketable collection of data. This data can be pure gold for advertisers, campaign managers, religious groups, etc. Cognizant of how social network companies make their money, I resisted the temptation and stayed off the most popular social network (Facebook) for quite a while.

Eventually, peer pressure got the best of me (Fins, I’m talking about you). Riding buddies from the west coast were riding through the midwest and were going to stop by for a visit but didn’t know when they’d be arriving. I gave them my email address and phone numbers but they insisted that I could be fully informed if I followed their postings on Facebook. I begrudgingly agreed and created my Facebook account. Naturally, the powers-that-be at Facebook couldn’t conceive of a parent that would curse their child with a name like Ghost, so I was forced to use my real name instead of the nickname that everybody I ride with knows (and loves?).

Linking the blog to Facebook

I’m not popular enough that people subscribe to my site independently (at publish time I only had four subscribers). Hell, most of my friends probably don’t even know the URL. Many of my riding friends are, however, on Facebook. Lucky for me, the WordPress team already thought of how to link the two.

One of the features of the Jetpack set of plugins for WordPress is the Publicize feature. In short, you link your blog to a Facebook account and every time you post something new, a corresponding status update appears in your Facebook timeline with a link to the new post.

Full circle: posting and hosting simultaneously

All of this leads to where my road blogging efforts are today.

- I have a self-hosted WordPress blog.

- I can update the blog from any computer with a network connection.

- I can update the blog from the WordPress app on my smartphone or tablet (as long as I have service or wifi access).

- Since my content is self-hosted, I still own it.

- My photos are geotagged immediately on both of my cameras (iPhone and Nikon).

- My friends can live vicariously through my daring exploits like crisscrossing the country on a motorcycle.

It all seems so effortless; all I have to do is write. It also seems very far removed from the old days of command-line email, Usenet, and online services.

Epilog

While the Harley Email Digest is long dead, the rec.motorcycles.harley (RMH) newsgroup is still alive, albeit a mere shadow of its former self with only a few of the regulars still using it. Reading RMH now is a bit like watching a few locals wander through the fairgrounds the day after the carnival left.